Security scanning should be part of the delivery flow, not an afterthought. In this post I show how I automated OWASP ZAP scans in Azure DevOps release pipelines and turned the findings into something teams can actually act on.

The approach uses a ZAP Docker container to run baseline scans, a PowerShell script to map findings to OWASP Top 10 categories and convert them to NUnit format, and the standard Azure DevOps publish-test-results task to surface vulnerabilities as visible test failures in your pipeline.

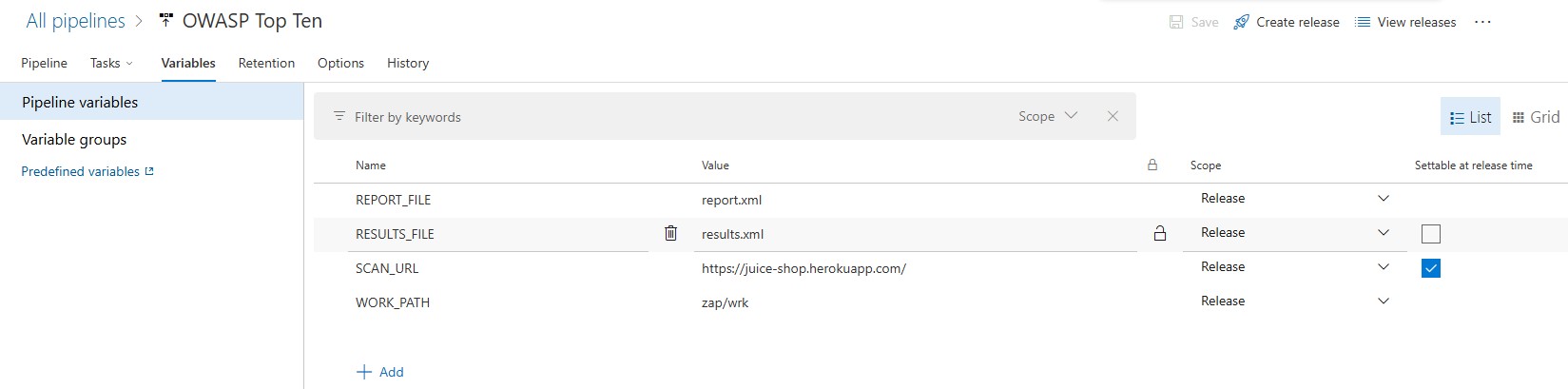

Defining Pipeline Environment Variables

The process begins by defining the pipeline variables that the pipeline tasks will use. The screenshot below shows the pipeline variables configuration.

The following variables are defined:

- SCAN_URL: The website URL that will be scanned by the ZAP scanner.

- REPORT_FILE: The file name of the report that will be generated by the ZAP scanner.

- RESULTS_FILE: The NUnit-formatted file that will be generated by the transformation task.

- WORK_PATH: The folder in which the REPORT_FILE and the RESULTS_FILE will be created.
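Azure DevOps exposes non-secret pipeline variables to script steps as environment variables, so a script can read these four values directly. The Python sketch below shows how they fit together: both generated files are placed inside WORK_PATH. The post's own scripts are Bash and PowerShell, and the helper name `resolve_scan_settings` is hypothetical.

```python
import os
from pathlib import Path
from typing import Mapping

REQUIRED = ("SCAN_URL", "REPORT_FILE", "RESULTS_FILE", "WORK_PATH")


def resolve_scan_settings(env: Mapping[str, str] = os.environ) -> dict:
    """Read the four pipeline variables and build full file paths.

    Hypothetical helper: raises KeyError listing any variable that is not set.
    """
    missing = [name for name in REQUIRED if name not in env]
    if missing:
        raise KeyError(f"missing pipeline variables: {', '.join(missing)}")

    work_path = Path(env["WORK_PATH"])
    return {
        "scan_url": env["SCAN_URL"],
        "work_path": work_path,
        # Both the ZAP report and the NUnit results live inside WORK_PATH.
        "report_path": work_path / env["REPORT_FILE"],
        "results_path": work_path / env["RESULTS_FILE"],
    }
```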

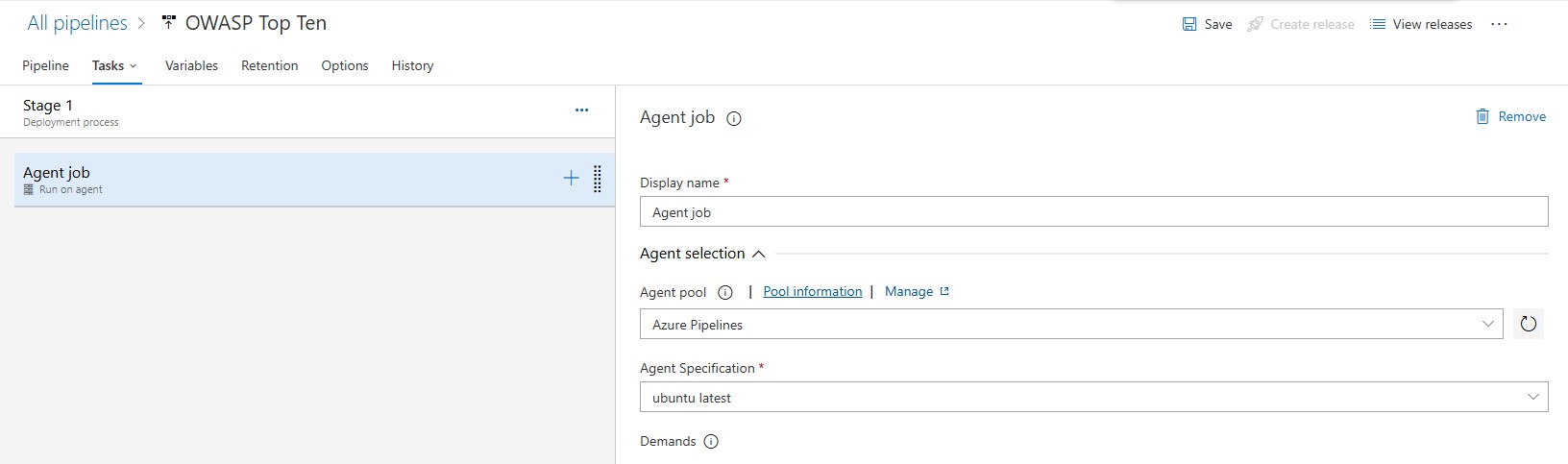

Defining the Agent Job

Next, define the agent job. Because we are going to run both Bash and PowerShell scripts, we choose the Azure Pipelines agent pool and the ubuntu-latest agent specification (Microsoft-hosted Ubuntu agents include PowerShell alongside Bash), as shown in the screenshot below:

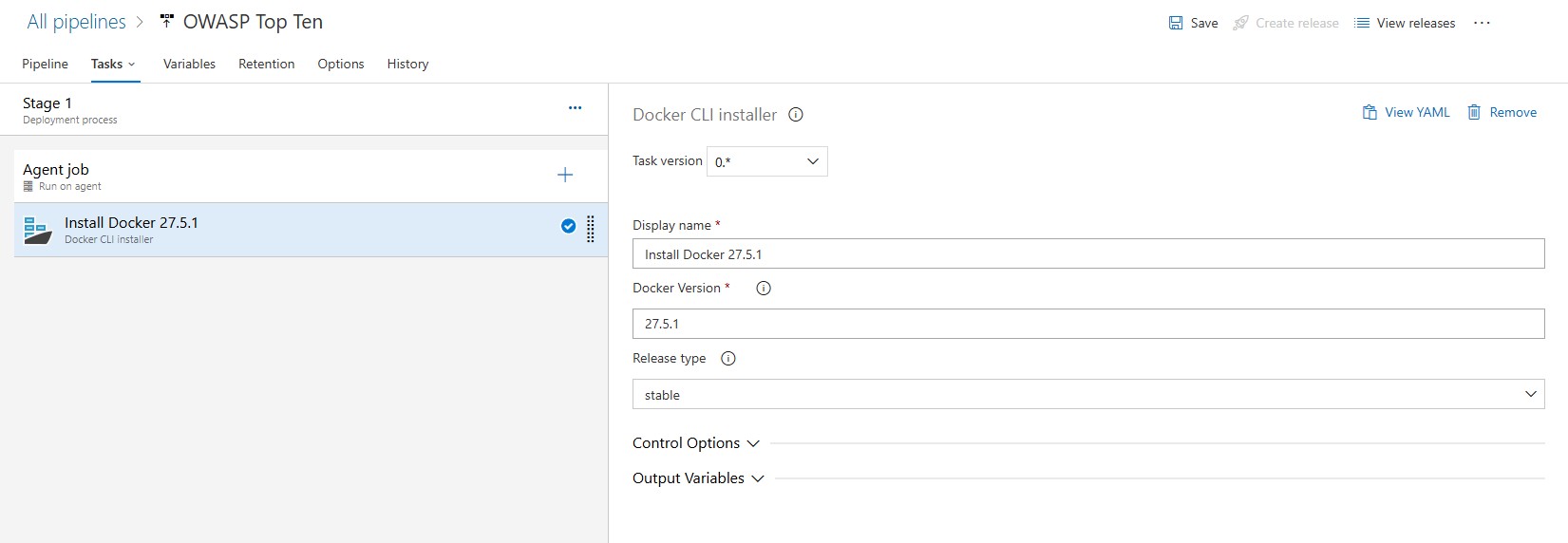

Installing Docker in the Agent Machine

To run Docker commands, the Docker engine needs to be installed on the agent machine. This is done by adding the Docker CLI Installer task, as shown below.

NOTE: Visit the Docker Engine release notes page to find the latest version of the Docker engine.

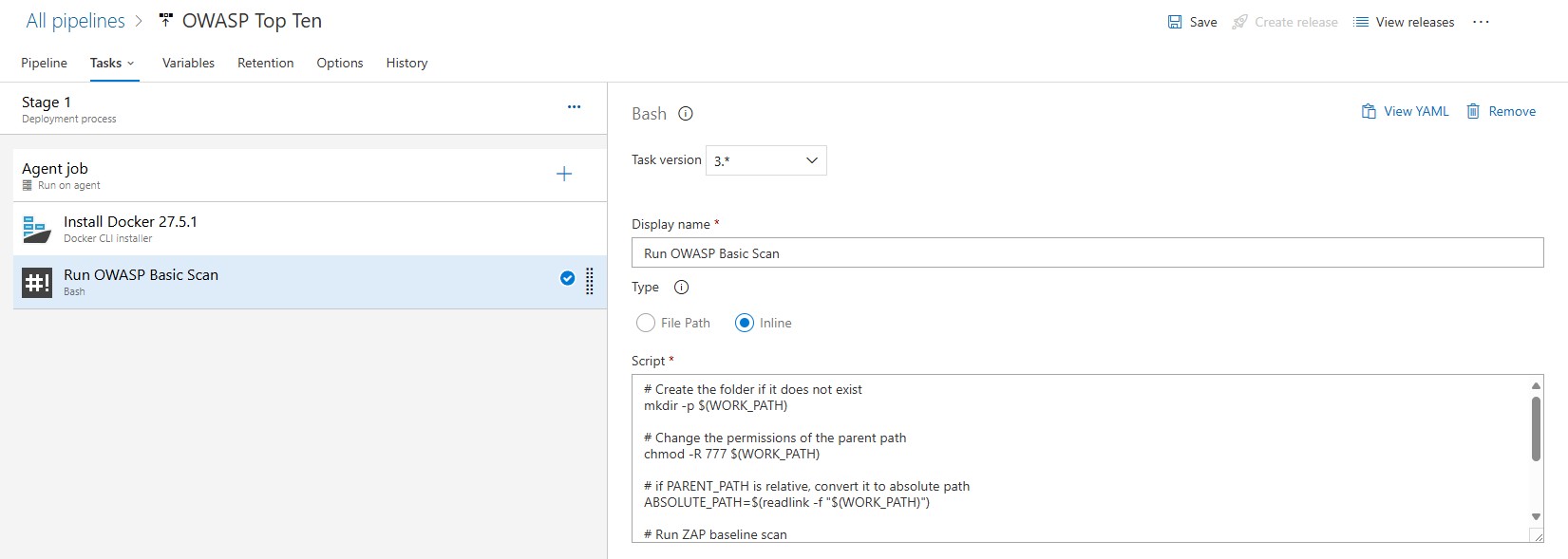

Running the OWASP ZAP Docker container

The next step uses a pre-configured OWASP ZAP Docker container. With this container, we can run baseline scans against our web applications to detect potential vulnerabilities.

The baseline scan command mounts a working directory into the container to store the results and scans your application’s URL for common vulnerabilities. The results are written as an XML report to the file named in the REPORT_FILE variable, inside the folder defined by the WORK_PATH variable.
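To show the general shape of such a command, here is a Python sketch that assembles a `docker run` invocation of ZAP's `zap-baseline.py` script. It is not the author's original script. The image name `ghcr.io/zaproxy/zaproxy:stable`, the `/zap/wrk` mount point, and the `-t`, `-x` and `-I` options come from the public ZAP baseline scan documentation. `build_zap_baseline_command` is a hypothetical helper name.

```python
import shlex
from typing import List


def build_zap_baseline_command(
    scan_url: str,
    work_path: str,
    report_file: str,
    image: str = "ghcr.io/zaproxy/zaproxy:stable",
    fail_on_warning: bool = False,
) -> List[str]:
    """Return the argv for a ZAP baseline scan running inside Docker.

    WORK_PATH is mounted at /zap/wrk, where zap-baseline.py writes its
    report files, so REPORT_FILE ends up inside WORK_PATH on the agent.
    """
    cmd = [
        "docker", "run", "--rm",
        "-v", f"{work_path}:/zap/wrk/:rw",
        image,
        "zap-baseline.py",
        "-t", scan_url,      # target URL to spider and passively scan
        "-x", report_file,   # write the XML report (path relative to /zap/wrk)
    ]
    if not fail_on_warning:
        # -I: do not return a failure exit code on warnings, so the
        # conversion and publishing steps still run afterwards.
        cmd.append("-I")
    return cmd


# Show the command that would run; nothing is executed here.
print(shlex.join(build_zap_baseline_command("https://example.com", "/tmp/zap", "report.xml")))
```

Passing `-I` is a design choice that fits this workflow. The findings are surfaced later as test failures, so the scan step itself does not need to fail. On hosted agents, the mounted folder may also need to be writable by the user the ZAP container runs as.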

Converting ZAP Results to NUnit Format

While the XML report is detailed, Azure DevOps cannot display it as pipeline test results out of the box. To make the findings visible, we’ll transform the ZAP results into NUnit format. NUnit is widely supported by CI/CD tools, and the Azure DevOps Publish Test Results task can read it directly.

In addition, we are only interested in the OWASP Top 10 vulnerabilities, so this step also filters the findings down to those that map to a Top 10 category.
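The transformation starts by reading the ZAP report. Below is a minimal Python sketch that collects alerts from a ZAP XML report and merges duplicates by alert name and CWE. The author's own script is PowerShell. The element names (`site`, `alertitem`, `alert`, `riskdesc`, `cweid`, `count`) are assumed to follow the traditional ZAP XML report layout, so check them against a real REPORT_FILE. `ZapAlert` and `parse_zap_alerts` are hypothetical names.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ZapAlert:
    name: str
    cwe_id: int        # -1 when the report gives no usable CWE
    risk: str          # ZAP's risk description, e.g. "High (Medium)"
    count: int = 0     # total instances across all sites
    sites: List[str] = field(default_factory=list)


def parse_zap_alerts(xml_text: str) -> List[ZapAlert]:
    """Collect <alertitem> entries from every <site> and merge duplicates.

    Alerts with the same name and CWE are merged: their instance counts
    are summed and each site they appeared on is recorded once.
    """
    root = ET.fromstring(xml_text)
    merged: Dict[Tuple[str, int], ZapAlert] = {}
    for site in root.iter("site"):
        site_name = site.get("name", "")
        for item in site.iter("alertitem"):
            name = item.findtext("alert", default="").strip()
            try:
                cwe_id = int(item.findtext("cweid", default="-1"))
            except ValueError:  # empty or non-numeric cweid
                cwe_id = -1
            try:
                count = int(item.findtext("count", default="1"))
            except ValueError:
                count = 1
            key = (name, cwe_id)
            if key not in merged:
                risk = item.findtext("riskdesc", default="").strip()
                merged[key] = ZapAlert(name, cwe_id, risk)
            alert = merged[key]
            alert.count += count
            if site_name not in alert.sites:
                alert.sites.append(site_name)
    return list(merged.values())
```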

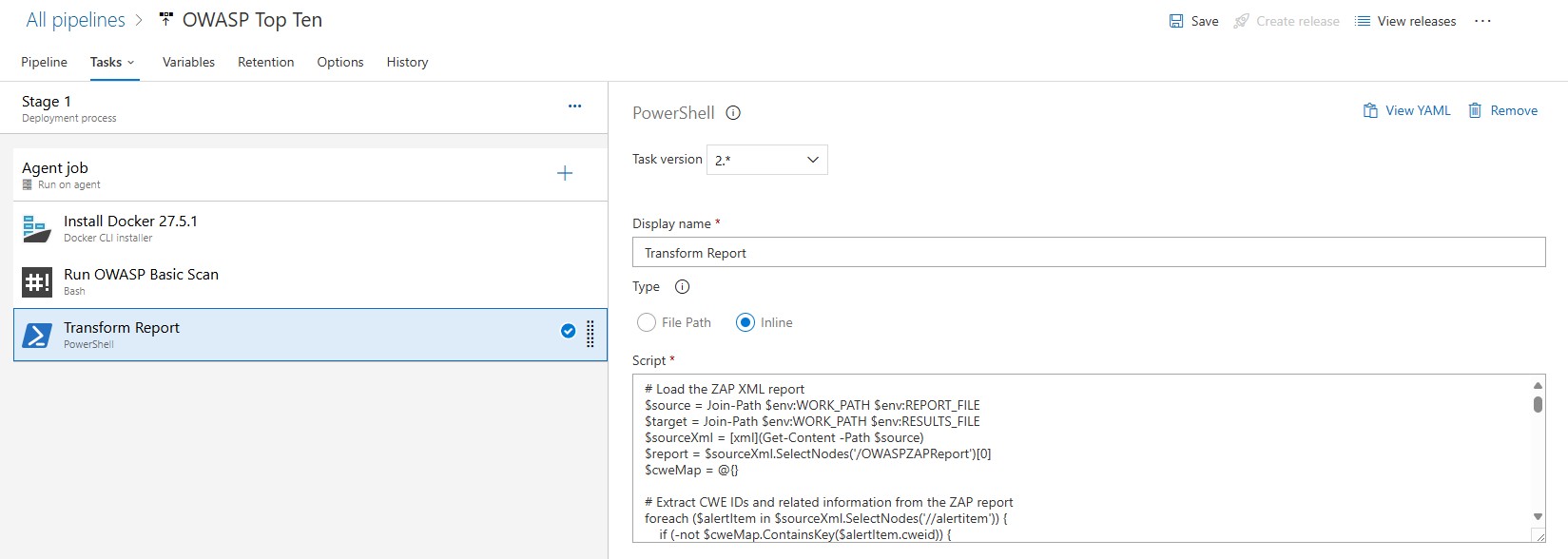

This is achieved using a custom PowerShell script. The script extracts key details from the ZAP XML report, maps vulnerabilities to the OWASP Top 10 categories, and converts the data into a structured NUnit report.


Key Features of the Script

- Alert Aggregation: Gathers and organizes vulnerability data from ZAP results.

- OWASP Mapping: Links CWEs from ZAP alerts to OWASP Top 10 categories. For a full list of CWE IDs and their mappings to the OWASP Top 10 categories, see the official OWASP documentation: OWASP Top Ten Mapping.

- NUnit Format Conversion: Outputs the data in NUnit format for easy integration with Azure DevOps.
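To illustrate the OWASP Mapping step, here is a small Python sketch with a deliberately partial lookup table. The entries are taken from the OWASP Top 10:2021 CWE mappings. The post does not say which Top 10 edition the original script targets. A real script would load the full list from the OWASP documentation mentioned above rather than hard-code a handful of entries. Alerts whose CWE is not in the table are dropped, which implements the Top 10 filtering described earlier. The function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple

# Partial excerpt of the OWASP Top 10:2021 CWE mapping (illustrative only).
OWASP_TOP10_2021: Dict[int, str] = {
    22: "A01:2021 Broken Access Control",
    200: "A01:2021 Broken Access Control",
    352: "A01:2021 Broken Access Control",
    1275: "A01:2021 Broken Access Control",
    319: "A02:2021 Cryptographic Failures",
    327: "A02:2021 Cryptographic Failures",
    79: "A03:2021 Injection",
    89: "A03:2021 Injection",
    1021: "A04:2021 Insecure Design",
    16: "A05:2021 Security Misconfiguration",
    614: "A05:2021 Security Misconfiguration",
    1004: "A05:2021 Security Misconfiguration",
    1104: "A06:2021 Vulnerable and Outdated Components",
    613: "A07:2021 Identification and Authentication Failures",
    502: "A08:2021 Software and Data Integrity Failures",
    532: "A09:2021 Security Logging and Monitoring Failures",
    918: "A10:2021 Server-Side Request Forgery",
}


def owasp_category(cwe_id: int) -> Optional[str]:
    """Return the Top 10 category for a CWE id, or None if it is not mapped."""
    return OWASP_TOP10_2021.get(cwe_id)


def group_by_category(alerts: Iterable[Tuple[str, int]]) -> Dict[str, List[str]]:
    """Group (alert name, CWE id) pairs by category, dropping unmapped CWEs."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for name, cwe_id in alerts:
        category = owasp_category(cwe_id)
        if category is not None:
            grouped[category].append(name)
    # Sort so categories come out in A01..A10 order.
    return dict(sorted(grouped.items()))
```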

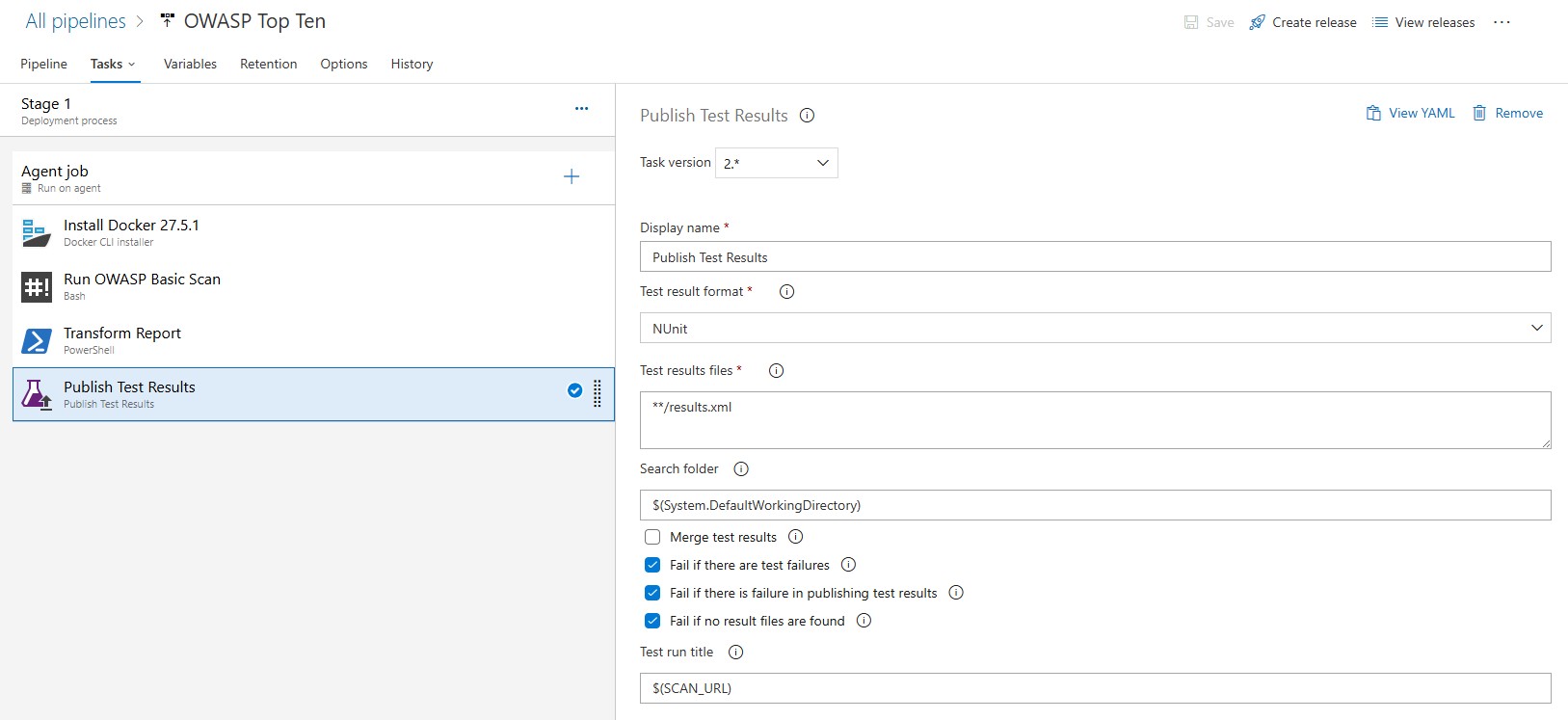

Integrating NUnit Results with Azure DevOps

Once the script generates the NUnit report (results.xml), it can be published to Azure DevOps as a test result. Add a Publish Test Results task to your pipeline, with the test result format set to NUnit and the file pattern pointing at the generated report.

This step ensures that vulnerabilities are tracked as test failures, making them highly visible to your development and security teams.
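As a rough illustration of what the published file can contain, here is a Python sketch that writes an NUnit 2-style `test-results` document. Each finding becomes a failed test case, grouped into one suite per OWASP category. The attributes are a minimal subset of the NUnit 2.5 format chosen for illustration. This is not the exact output of the original script, so confirm on a test run that the task reports the expected counts. `build_nunit_results` and `write_nunit_results` are hypothetical names.

```python
import xml.etree.ElementTree as ET
from datetime import datetime
from typing import Dict, List, Tuple


def build_nunit_results(findings: Dict[str, List[Tuple[str, str]]]) -> ET.ElementTree:
    """Build an NUnit 2-style <test-results> tree from grouped findings.

    `findings` maps an OWASP Top 10 category to (alert name, detail) pairs.
    Each category becomes a <test-suite>; each alert becomes a failed <test-case>.
    """
    total = sum(len(alerts) for alerts in findings.values())
    now = datetime.now()
    root = ET.Element("test-results", {
        "name": "OWASP ZAP baseline scan",
        "total": str(total),
        "errors": "0",
        "failures": str(total),  # every finding is reported as a failure
        "not-run": "0",
        "date": now.strftime("%Y-%m-%d"),
        "time": now.strftime("%H:%M:%S"),
    })
    for category, alerts in findings.items():
        suite = ET.SubElement(root, "test-suite", {
            "type": "TestFixture",
            "name": category,
            "executed": "True",
            "result": "Failure" if alerts else "Success",
            "success": "False" if alerts else "True",
        })
        results = ET.SubElement(suite, "results")
        for name, detail in alerts:
            case = ET.SubElement(results, "test-case", {
                "name": f"{category}: {name}",
                "executed": "True",
                "result": "Failure",
                "success": "False",
            })
            failure = ET.SubElement(case, "failure")
            ET.SubElement(failure, "message").text = detail
    return ET.ElementTree(root)


def write_nunit_results(findings: Dict[str, List[Tuple[str, str]]], path: str) -> None:
    """Write the NUnit document to `path` (for example WORK_PATH/RESULTS_FILE)."""
    build_nunit_results(findings).write(path, encoding="utf-8", xml_declaration=True)
```

By default, failed test cases do not fail the Publish Test Results task. If findings should stop the release, the task's `failTaskOnFailedTests` option turns failed test cases into a failed task.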

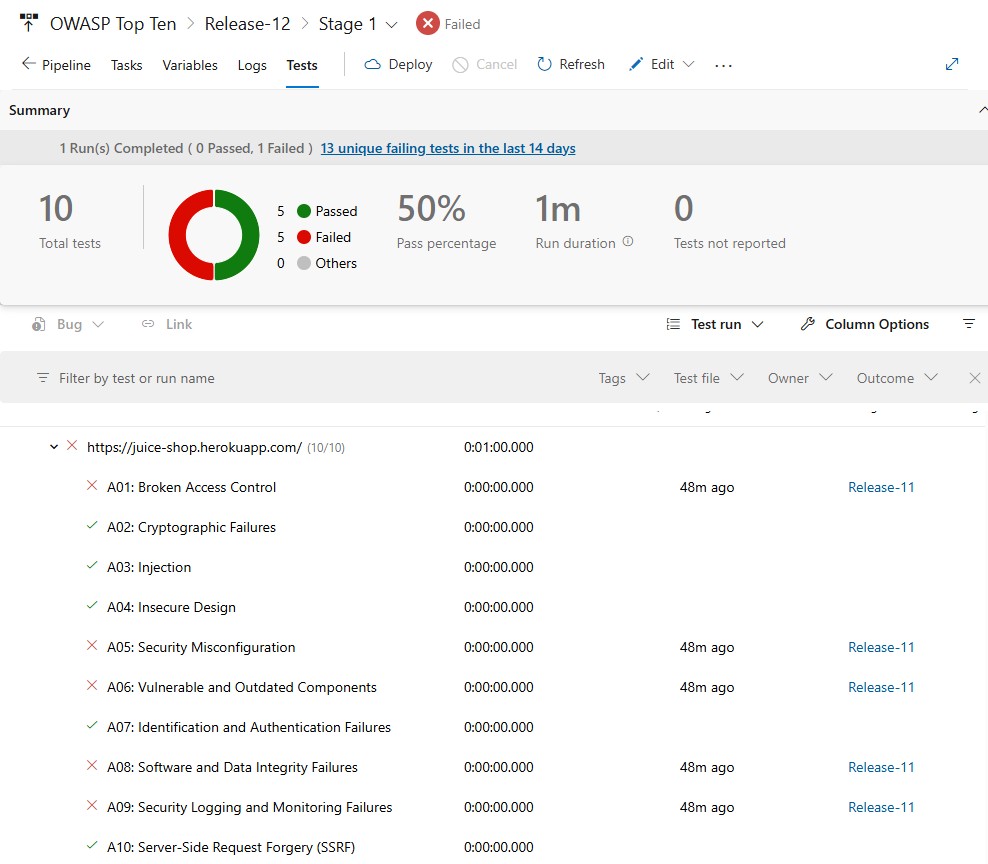

What this gets you

By integrating OWASP ZAP scans with Azure DevOps, security findings show up as test failures in the same interface as the rest of your pipeline results. The PowerShell script handles the CWE-to-OWASP mapping and NUnit conversion, so you do not have to dig through raw XML to understand what failed.

One thing I appreciate even more now than when I first wrote this post is that DAST automation is only one part of a strong DevSecOps loop. OWASP ZAP gives us runtime-facing feedback, but the workflow becomes even more powerful when it sits beside static analysis, issue routing, and remediation paths that developers can act on without leaving their normal delivery flow.

I explored that next step in How I Run SonarQube in My Own CI Pipeline (And Let AI Fix What It Finds). If this post shows one generation of automated AppSec reporting, that newer post shows how I now think about turning findings directly into reviewable engineering work.

References: